I didn't say you can't run it locally; I said the opposite.

Now we also need to pay attention to details. LLM stands for Large Language Model, and it refers ONLY to AI that you chat with (text to text), like ChatGPT, Gemini, etc. It does not include voice generation or natural voice models, or video and image generation and processing (text to speech, text to video, text to image for short), which is what the OP asked about.

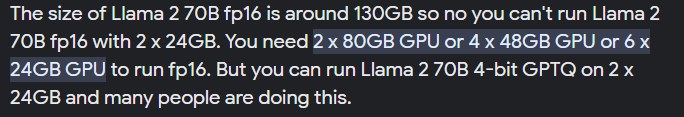

Again, though, you may have forgotten that the SIZE of said models is the limiting factor and not the software itself, which I also noted above. Don't take my word for it: see what one of the best LLMs, Llama 2, asks for to run at its full potential (and wait till you see the images):

Here the GB requirements are for the graphics cards, so you need 6 x 3090s to run it at 16-bit, or 2 x 3090s at 4-bit. Of course, other versions and other models need less VRAM, RAM, or CPU, but still, this is not for the average user. Now note this is for PRETRAINED models; the requirements to train (add your own data) are much, much larger.
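
If you want to sanity-check those GPU counts yourself, here is a rough back-of-the-envelope sketch. It assumes the 70-billion-parameter Llama 2 variant (my assumption for "full potential"), 24 GB of VRAM per RTX 3090, and counts the model weights only, so real usage will be somewhat higher once you add context cache and runtime overhead. The function names are made up for illustration.

```python
import math

def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9

def gpus_needed(n_params: float, bits_per_param: int, vram_gb: float = 24.0) -> int:
    """Minimum number of cards whose combined VRAM holds the weights.

    vram_gb defaults to 24, the VRAM of one RTX 3090.
    Ignores KV cache, activations, and framework overhead.
    """
    return math.ceil(weight_memory_gb(n_params, bits_per_param) / vram_gb)

LLAMA2_PARAMS = 70e9  # assumption: the largest Llama 2 variant

for bits in (16, 4):
    gb = weight_memory_gb(LLAMA2_PARAMS, bits)
    cards = gpus_needed(LLAMA2_PARAMS, bits)
    print(f"{bits}-bit: ~{gb:.0f} GB of weights -> {cards} x 3090")
```

Under those assumptions the weights alone come to roughly 140 GB at 16-bit and 35 GB at 4-bit, which lines up with 6 and 2 cards respectively.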

If you like, do a search on the requirements for running AI for graphics, video, and audio, and you will see how demanding and difficult it is to run one by yourself.

That is actually the opposite of what the OP asks. Dragon is for speech recognition: you talk and it writes text. The OP asks for text to be converted to a realistic human voice.